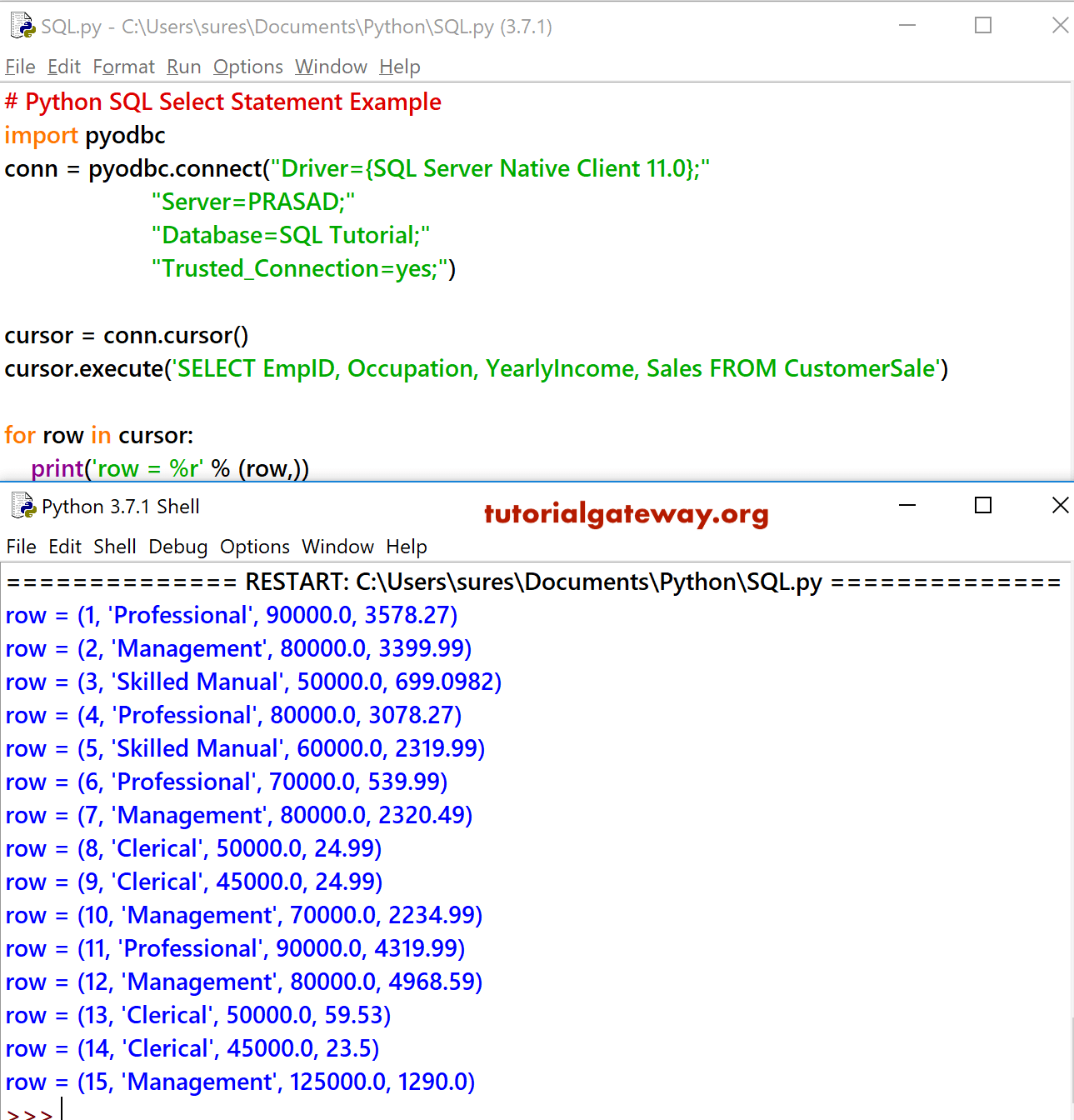

Create a file named pyodbc-test-cluster.py. When the file is run, the first two rows of the database table are displayed.

With the driver name, server name, and database name in hand, a special string has to be created, which will be passed to the `connect()` function of the pyodbc library. The format of the string is as follows.
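A minimal sketch of such a string, assuming the common `DRIVER`, `SERVER`, and `DATABASE` keywords and Windows authentication (`Trusted_Connection=yes`). The helper names and the sample driver, server, and database values are hypothetical and need to be replaced with your own.

```python
def build_sql_server_conn_str(driver, server, database, trusted=True):
    """Join the driver, server, and database names into one ODBC connection string."""
    parts = [
        f"DRIVER={{{driver}}}",  # the driver name is wrapped in curly braces
        f"SERVER={server}",
        f"DATABASE={database}",
    ]
    if trusted:
        # Assumption: Windows authentication; use UID/PWD instead for SQL logins.
        parts.append("Trusted_Connection=yes")
    return ";".join(parts) + ";"


def open_connection(conn_str):
    """Pass the finished string to pyodbc.connect()."""
    import pyodbc  # imported here so the string helper works without pyodbc installed

    return pyodbc.connect(conn_str)


# Hypothetical sample values.
conn_str = build_sql_server_conn_str("ODBC Driver 17 for SQL Server", "localhost", "testdb")
```

Calling `open_connection(conn_str)` then returns a pyodbc connection, provided the named driver is installed and the server is reachable.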


To connect to a Microsoft SQL Server, we first need a few details about the server: the driver name, the server name, and the database name.

For a Databricks SQL endpoint, the DSN uses the following values:

- Host(s): The Server Hostname value from the Connection Details tab for your SQL endpoint.
- Password: The value of your personal access token for your SQL endpoint.

In the HTTP Properties dialog box, for HTTP Path, enter the HTTP Path value from the Connection Details tab for your SQL endpoint, and then click OK.

The pyodbc-test-cluster.py script does the following:

- Imports `pyodbc`.
- Sets `table_name` to the name of the database table to query. Replace the empty value with your table's name.
- Connects to the Databricks cluster by using the Data Source Name (DSN) that you created earlier: `connect("DSN=Databricks_Cluster", autocommit=True)`.
- Runs a SQL query by using the preceding connection. The query selects from the table with `LIMIT 2`.
- Loops over the rows in the cursor.

To speed up running the code, start the cluster that corresponds to the Host(s) value in the Simba Spark ODBC Driver DSN Setup dialog box for your Databricks cluster. Then run the pyodbc-test-cluster.py file with your Python interpreter.
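Put together, the script steps described above might look like the following sketch. It assumes a DSN named `Databricks_Cluster` already exists. The function names, the identifier check, and the sample table name are hypothetical additions and are not part of the original script.

```python
import re


def build_query(table_name, limit=2):
    """Build the SELECT statement for the first `limit` rows of a table."""
    # Table names cannot be bound as query parameters, so accept only plain
    # (optionally dotted) identifiers before interpolating the name.
    if not re.fullmatch(r"[A-Za-z_][A-Za-z0-9_]*(\.[A-Za-z_][A-Za-z0-9_]*)*", table_name):
        raise ValueError(f"unexpected table name: {table_name!r}")
    return f"SELECT * FROM {table_name} LIMIT {int(limit)}"


def fetch_first_rows(table_name, dsn="Databricks_Cluster", limit=2):
    """Connect through the DSN, run the query, and return the rows."""
    import pyodbc  # imported here so build_query works without pyodbc installed

    connection = pyodbc.connect(f"DSN={dsn}", autocommit=True)
    try:
        cursor = connection.cursor()
        cursor.execute(build_query(table_name, limit))
        return cursor.fetchall()
    finally:
        connection.close()
```

With the cluster running, `for row in fetch_first_rows("default.diamonds"): print(row)` would print the first two rows, where `default.diamonds` stands in for your own table.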

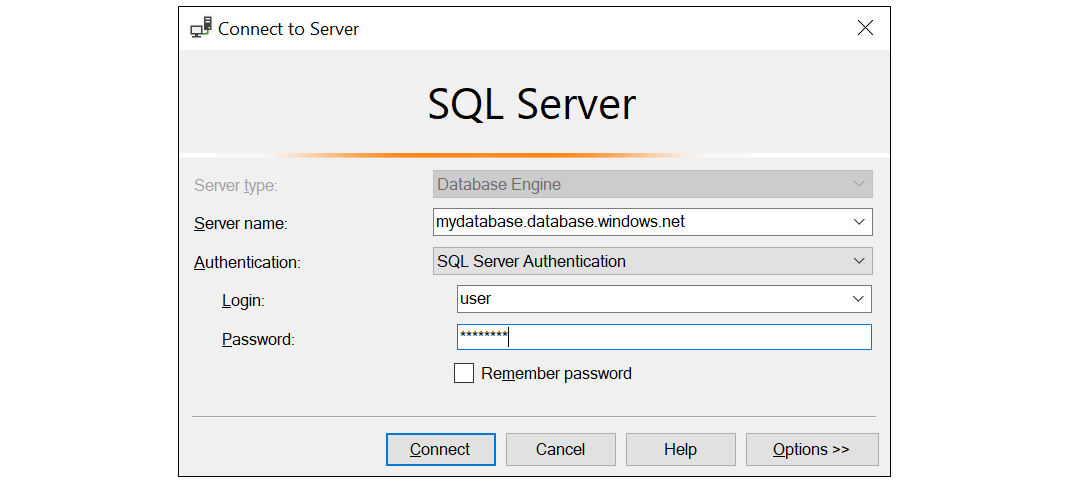

Specify connection details for the Databricks cluster or Databricks SQL endpoint for pyodbc to use.

To specify connection details for a cluster:

1. Add a data source name (DSN) that contains information about your cluster. Start the ODBC Data Sources application: on the Start menu, begin typing ODBC, and then click ODBC Data Sources.
2. In the Simba Spark ODBC Driver DSN Setup dialog box, change the following values:
   - Spark Server Type: SparkThriftServer (Spark 1.1 and later).
   - Host(s): The Server Hostname value from the Advanced Options, JDBC/ODBC tab for your cluster.
   - Password: The value of your personal access token for your Databricks workspace.
3. In the HTTP Properties dialog box, for HTTP Path, enter the HTTP Path value from the Advanced Options, JDBC/ODBC tab for your cluster, and then click OK.
4. In the SSL Options dialog box, check the Enable SSL box, and then click OK.
5. To allow pyodbc to switch connections to a different cluster, repeat this procedure with the specific connection details.

To specify connection details for a SQL endpoint:

1. In the ODBC Data Sources application, on the User DSN tab, click Add.
2. In the Create New Data Source dialog box, click Simba Spark ODBC Driver, and then click Finish.
3. In the Simba Spark ODBC Driver dialog box, enter the Host(s) and Password values for your SQL endpoint.

In the connector code, the connection is stored in `self._connection`, which is assigned the result of a `connect(connection_config, **connection_config.…)` call.
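The dialog values in the steps above can in principle also be passed to pyodbc directly, without a saved DSN. The sketch below assembles such a DSN-less connection string. The key names (`Host`, `Port`, `HTTPPath`, `SparkServerType`, `ThriftTransport`, `SSL`, `AuthMech`, `UID`, `PWD`) and their values are assumptions based on the Simba Spark ODBC driver's configuration options and should be checked against the driver's installation guide. The function name, hostname, HTTP path, and token shown are placeholders.

```python
def build_databricks_conn_str(host, http_path, token,
                              driver="Simba Spark ODBC Driver", port=443):
    """Assemble a DSN-less connection string from the values entered in the dialogs."""
    settings = {
        "Driver": driver,
        "Host": host,            # Host(s): the Server Hostname value
        "Port": port,
        "HTTPPath": http_path,   # HTTP Path from the HTTP Properties dialog
        "SparkServerType": 3,    # assumed code for SparkThriftServer (Spark 1.1 and later)
        "ThriftTransport": 2,    # assumed code for HTTP transport
        "SSL": 1,                # Enable SSL
        "AuthMech": 3,           # assumed code for user name and password
        "UID": "token",          # assumed literal user name for token authentication
        "PWD": token,            # Password: the personal access token
    }
    return ";".join(f"{key}={value}" for key, value in settings.items())


# Placeholder values for illustration only.
example = build_databricks_conn_str(
    host="example.cloud.databricks.com",
    http_path="sql/protocolv1/o/0/0000-000000-example",
    token="<personal-access-token>",
)
```

The resulting string would be passed to `pyodbc.connect(example, autocommit=True)` in place of the `DSN=...` form.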
In the example session shown here, we used pyodbc with the SQL Server ODBC driver to connect Python to a SQL Server Express database. The driver can also be used to access other editions of SQL Server from Python (SQL Server 7.0, SQL Server 2000, SQL Server 2005, SQL Server 2008, SQL Server 2012, SQL Server 2014, SQL Server 2016, SQL Server 2017 and SQL Server 2019).

The connector code that polls the database over ODBC handles reconnecting and polling as follows. Every log call passes `self.get_name()`, so each message identifies the connector that produced it.

- Reconnecting: the code checks `self._config.get("reconnect", self.DEFAULT_RECONNECT_STATE)`, reads `reconnect_period = self._config.get("reconnectPeriod", self.DEFAULT_RECONNECT_PERIOD)`, and logs at info level "Will reconnect to database in %d second(s)".
- Giving up: when it cannot connect, it logs the error "Cannot connect to database so exit from main loop". When it cannot set up the database iterator, it logs the error "Cannot init database iterator so exit from main loop".
- Polling: the code reads `polling_period = self._config.get("period", self.DEFAULT_POLL_PERIOD)` and logs at debug level "Next polling iteration will be in %d second(s)".
- Problems while polling: warnings are logged as "Warning while polling database: %s" with `str(w)`, and errors as "Error while polling database: %s" with `str(e)`.
- Connecting: the code takes `connection_config` from `self._config` and logs at debug level "Opening connection to database".
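The reconnect and polling behavior described above can be sketched as a small standalone loop. This is an illustration under stated assumptions and not the connector's actual code: the function `run_poll_loop`, the injected `connect`, `poll`, and `sleep` callables, the `max_polls` limit, the default values, and the `[%s]` name prefix in the log messages are all hypothetical. Only the config keys `reconnect`, `reconnectPeriod`, and `period` and the message wording come from the fragments.

```python
import logging
import time

log = logging.getLogger(__name__)

# Assumed defaults; the fragments name these constants but not their values.
DEFAULT_RECONNECT_STATE = True
DEFAULT_RECONNECT_PERIOD = 60  # seconds
DEFAULT_POLL_PERIOD = 60       # seconds


def run_poll_loop(name, config, connect, poll, max_polls, sleep=time.sleep):
    """Poll up to max_polls times, reconnecting after failures if configured.

    connect(config) returns a connection and poll(connection) runs one query.
    Returns the number of successful polls.
    """
    reconnect = config.get("reconnect", DEFAULT_RECONNECT_STATE)
    reconnect_period = config.get("reconnectPeriod", DEFAULT_RECONNECT_PERIOD)
    polling_period = config.get("period", DEFAULT_POLL_PERIOD)
    done = 0
    while True:
        connection = None
        try:
            log.debug("[%s] Opening connection to database", name)
            connection = connect(config)
        except Exception as e:
            log.error("[%s] Connection failed: %s", name, str(e))
        if connection is not None:
            try:
                while done < max_polls:
                    poll(connection)
                    done += 1
                    log.debug("[%s] Next polling iteration will be in %d second(s)",
                              name, polling_period)
                    sleep(polling_period)
            except Exception as e:
                log.error("[%s] Error while polling database: %s", name, str(e))
        if done >= max_polls:
            break
        if not reconnect:
            log.error("[%s] Cannot connect to database so exit from main loop", name)
            break
        log.info("[%s] Will reconnect to database in %d second(s)", name, reconnect_period)
        sleep(reconnect_period)
    return done
```

Passing `sleep` as a parameter keeps the loop easy to exercise without real delays. In real use, `connect` could wrap `pyodbc.connect` and `poll` could execute the polling query.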